As robots continue to evolve and adopt increasingly human-like features, a curious psychological phenomenon resurfaces with greater intensity—the uncanny valley. This term refers to the unsettling feeling people experience when a robot looks almost, but not quite, human. While engineers and designers have long believed that making robots appear more human would foster trust and acceptance, new research and technological milestones are challenging that assumption.

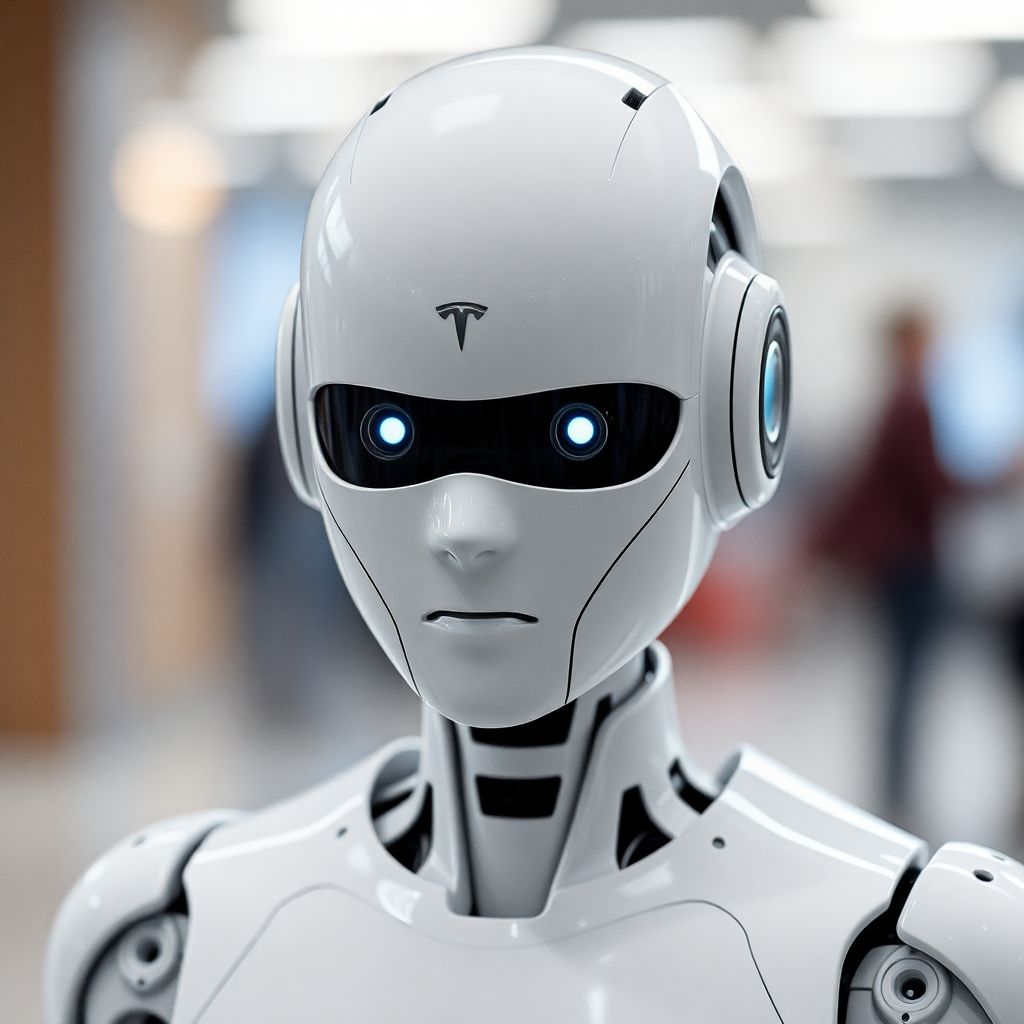

Recent advancements in robotics have brought machines like Tesla’s Optimus, Figure 02, and Unitree’s G1 closer than ever to human appearance and behavior. But instead of delighting audiences, these machines often evoke discomfort—even fear. The latest example comes from a Chinese robotics company, Aheadform, which recently showcased its hyper-realistic robotic head known as Origin M1. This android can blink, nod, and replicate nuanced facial expressions with astonishing accuracy. However, instead of admiration, the robot drew widespread unease. A video demonstration of Origin M1 went viral, amassing over 400,000 views and sparking a wave of reactions describing the robot as “creepy” and “too real.”

The reaction underscores a critical psychological boundary: the closer a robot gets to looking human—without fully crossing into realistic territory—the more likely it is to elicit revulsion rather than reassurance. Researchers have found that while people initially feel safer and more at ease with robots that exhibit familiar, friendly traits, there’s a tipping point. When robots become almost indistinguishable from humans, they stop being endearing and start becoming eerie.

One common explanation is that this discomfort is rooted in an evolved sensitivity to faces. Humans are finely tuned to detect subtle cues in faces and expressions. When a robot mimics these cues imperfectly, with slightly unnatural blinking or oddly timed emotional expressions, it violates our expectations. The result is a perceptual mismatch: our brains register something as human-like, yet something isn’t quite right. That gap between what we expect and what we actually perceive is what produces the uncanny valley effect.

The phenomenon isn’t new. It was first described in 1970 by Japanese roboticist Masahiro Mori, who proposed that as robots gain more human characteristics, people’s affinity for them rises until the robots look nearly human, then drops sharply (the “valley”) before rising again if the robot becomes indistinguishable from a human. As artificial intelligence and robotics advance, however, the stakes become higher. These machines are no longer confined to labs or amusement parks. They’re entering homes, hospitals, and workplaces.

Humanoid robots are now being developed for roles ranging from elderly care to customer service. In theory, a human-looking robot could provide more empathetic interaction, especially for individuals experiencing loneliness or isolation. But if the robot’s appearance or behavior triggers unease, it may do more harm than good. Trust, which is crucial in these environments, can easily be undermined by a single unnatural gesture.

Designers face a complex dilemma: how to balance functionality, familiarity, and emotional comfort. Some experts suggest that robots should embrace a stylized, non-human appearance—like animated characters or abstract designs—to avoid the uncanny valley altogether. Others argue that perfecting realism is the only way to overcome the discomfort, by eventually making robots indistinguishable from humans.

The debate extends beyond aesthetics. It touches on questions of ethics, identity, and the future of human-machine interaction. If a robot can convincingly simulate emotions, does that imply consciousness? Should such machines be granted rights or protections? And how will society define what it means to be “human” when machines can replicate not just our movements, but our micro-expressions and emotional responses?

Public perception also plays a significant role in how these technologies evolve. Media portrayals of humanoid robots—ranging from helpful companions to malevolent androids—shape expectations and fears. As real-world robots begin to mirror their fictional counterparts more closely, the line between imagination and reality continues to blur.

Moreover, cultural differences affect how people react to humanoid robots. Some research suggests that people in countries such as Japan or South Korea, where robots are a more familiar part of everyday life, tend to show less aversion to humanlike machines. In contrast, Western audiences often react more strongly to robots that fall into the uncanny valley. This cultural lens must be considered when designing robots for global markets.

Another layer to this issue is the integration of artificial intelligence with hyper-realistic designs. As AI becomes more sophisticated in interpreting and responding to human behavior, the illusion of empathy becomes stronger. If a robot appears human and reacts appropriately, users may form emotional attachments or ascribe intentions that the machine doesn’t actually possess. This could lead to manipulation, especially among vulnerable populations.

To navigate these challenges, some developers are exploring transparency in design. That means clearly signaling to users that a machine is artificial, even if it behaves in a human-like way. Subtle visual cues—such as unconventional skin tones, robotic textures, or stylized features—can preserve functionality while avoiding deception.

In conclusion, while the push toward realism in robot design aims to foster comfort and connection, it often has the opposite effect. As machines creep closer to human likeness, they risk triggering deep-seated psychological responses that undermine trust. For robots to fulfill their promise in society, designers must tread carefully, balancing innovation with emotional intelligence and ethical foresight. The uncanny valley is not just a design challenge; it’s a mirror reflecting our deepest fears, hopes, and questions about what it means to be human.